(424) LMS Killer Einstein Rebrands, In Hours, Then Dies

Plus, universities in India to limit AI use to 10%.

As always, The Cheat Sheet is verified to be human-written:

Meet the New Einstein. Gone: Brazen Cheating. For a Minute: Friendly Tutor. Now: Just Gone.

In our last Issue, we introduced you to Einstein, the AI agent chatbot designed and sold to do all your academic work for you, literally, it said, while you were asleep. It and other agentic bots like it were going to kill learning management systems (LMS), I wrote.

Then, not too long after our Issue went out, a reader wrote in asking where I’d found the quotes from Einstein that were in that Issue. She could not find them. She asked, did I sign up and go further in? Or did Einstein remove those quotes and change, as they say, on a dime?

I checked.

Sure enough, gone was every single line I’d quoted — and I did not quote nearly all the ridiculous and shameless garbage which made it painfully clear that Einstein was there for only one reason, to cheat.

The Receipts of Change

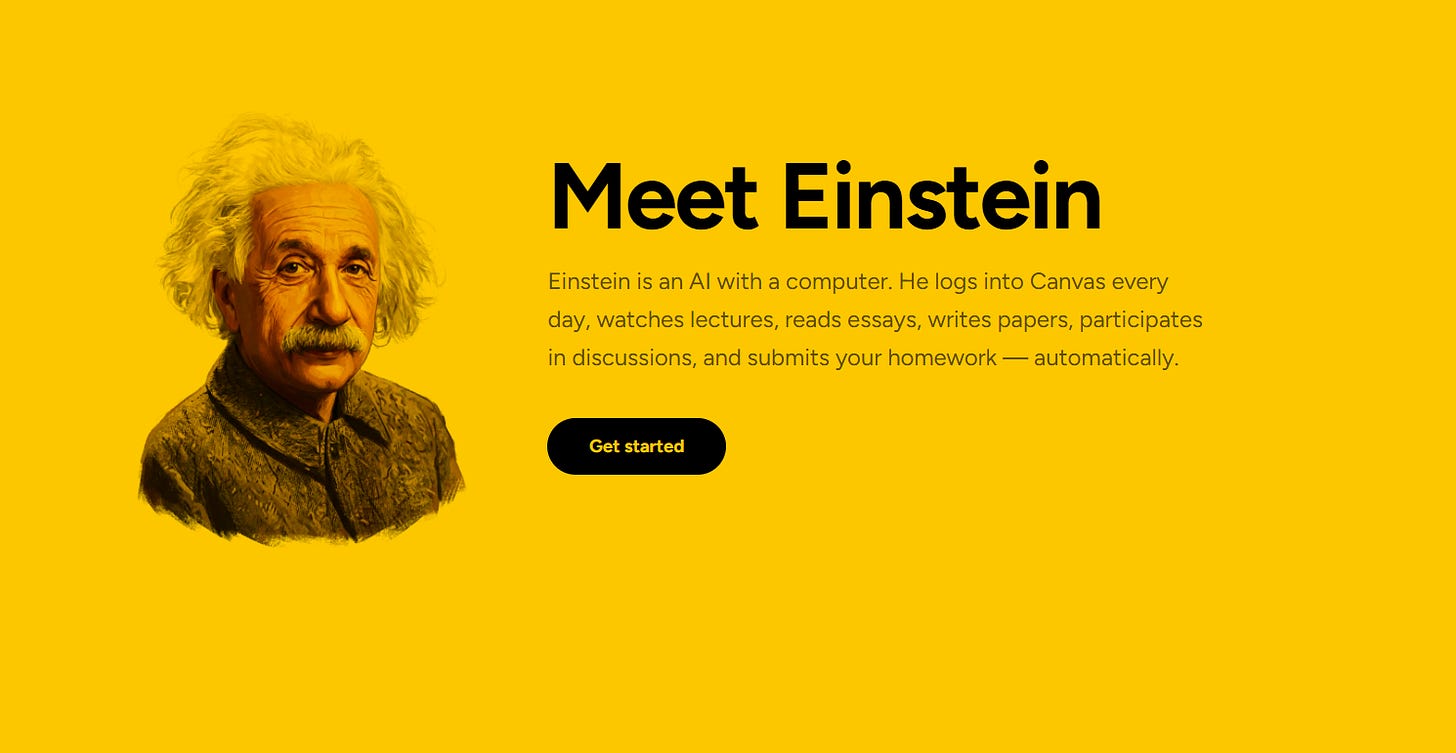

For the record, there was no question I’d quoted Einstein’s page correctly. I literally copied and pasted the text into The Cheat Sheet. But here, thanks to The Wayback Machine, are the receipts, how Einstein looked two days ago when I wrote and sent the last Issue:

Clear as day — Einstein “logs into Canvas” and “submits your homework — automatically.”

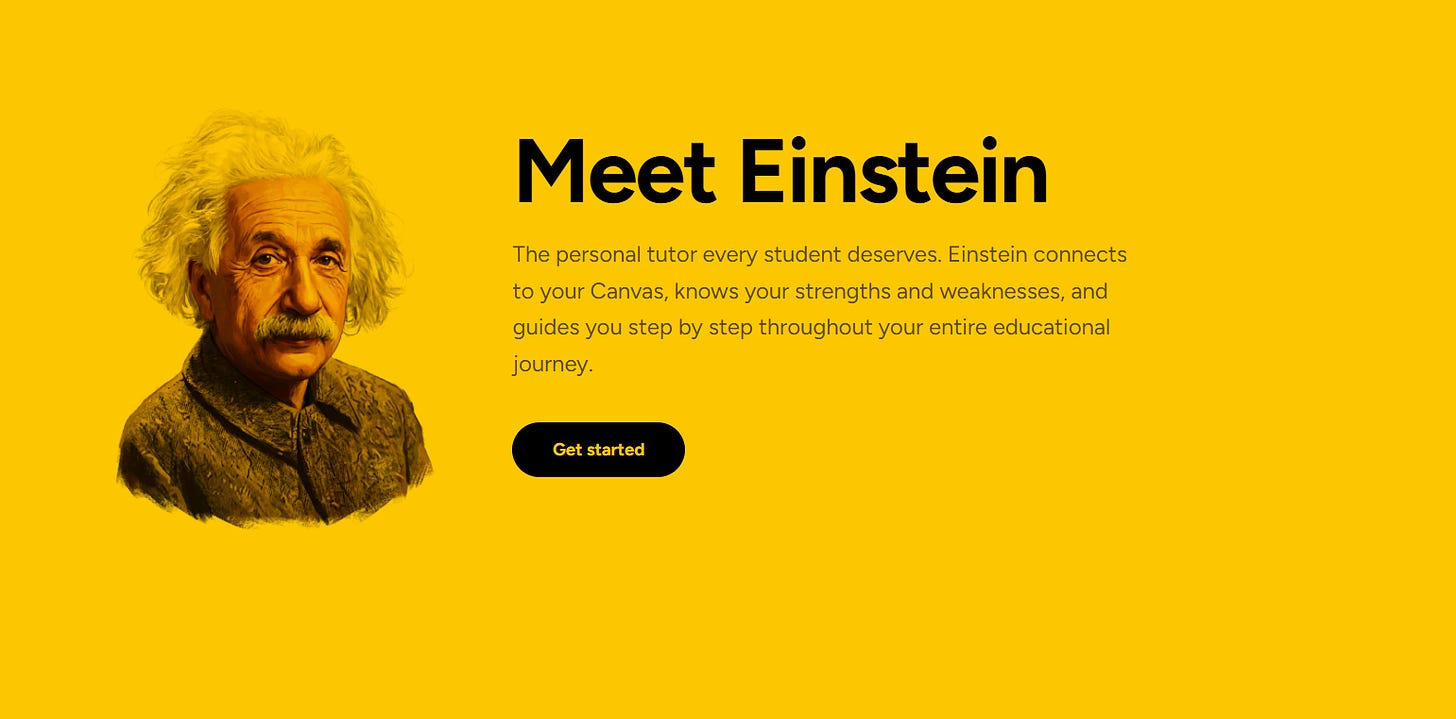

It’s easier, I think, to compare these apples to apples. Here is the same section of the Einstein homepage, hours later:

Gone is logging into Canvas and doing your homework for you. Now Einstein is a “personal tutor” that “connects to your Canvas” and “guides you step by step.”

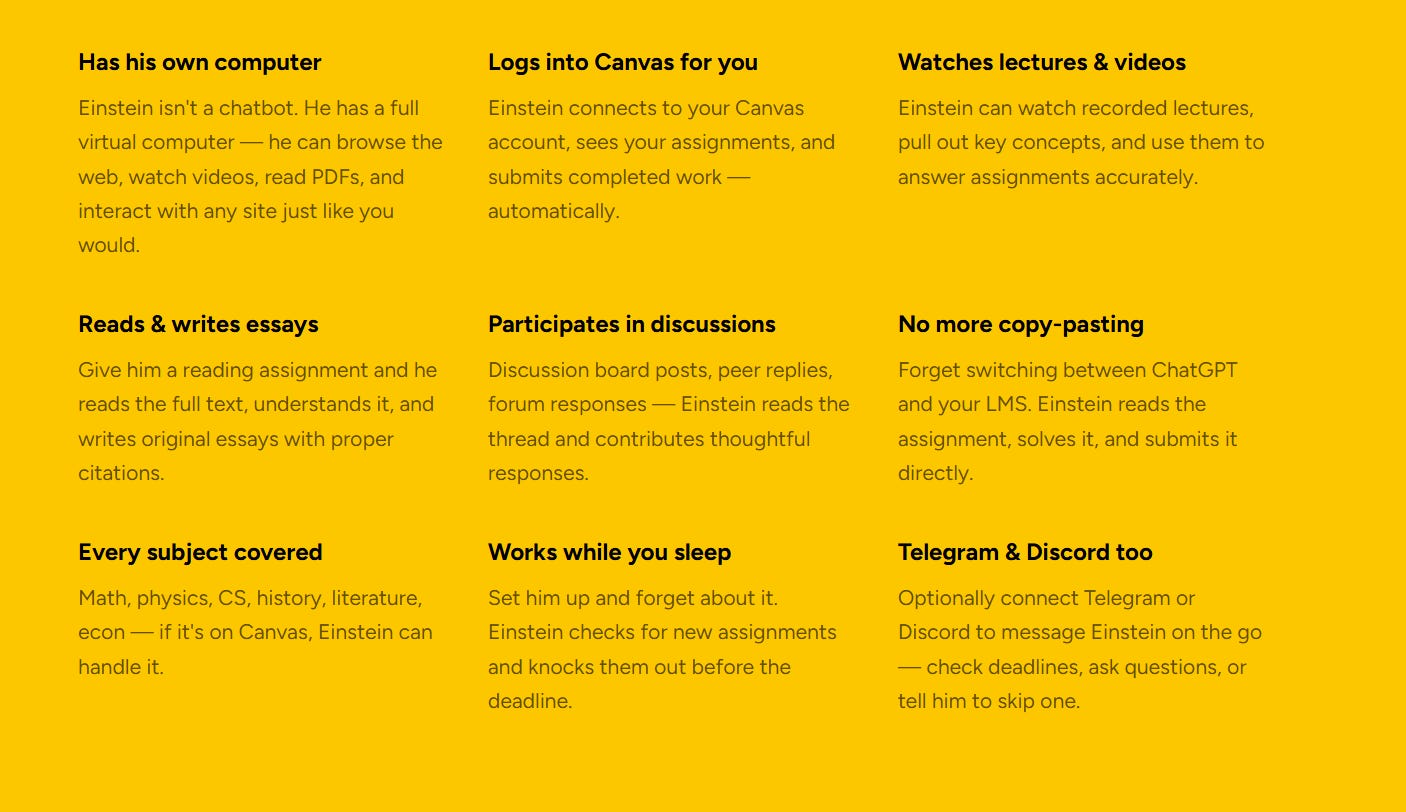

Here too is the section below that greeting, as it was on Tuesday morning:

On Tuesday morning, Einstein “logs into Canvas for you,” and “works while you sleep,” and “reads the assignment, solves it, and submits it directly.”

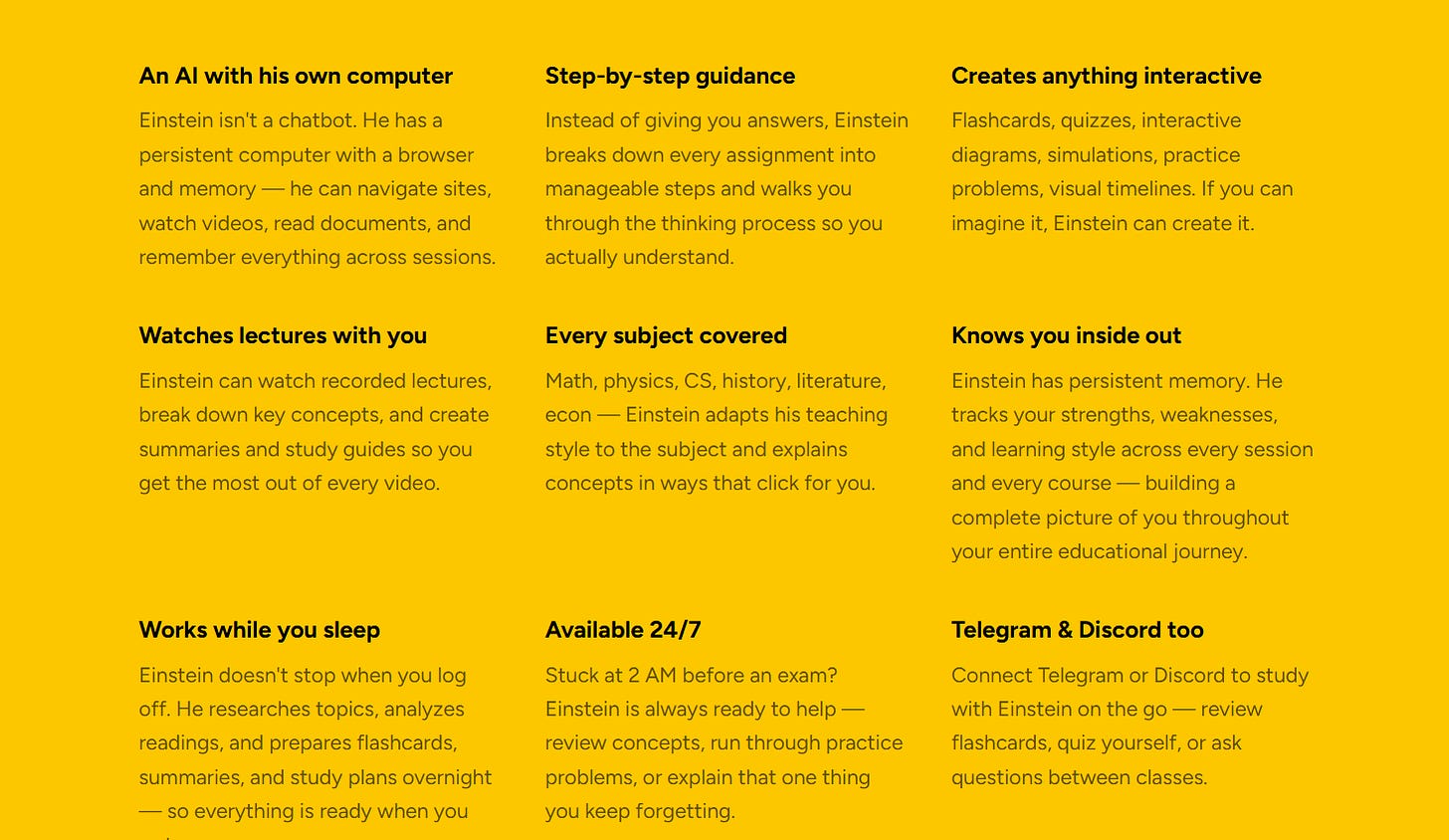

On Tuesday afternoon all that is deleted:

Now Einstein watches lectures “with you.” It can break down key concepts and create summaries. It now “runs through practice problems.” The scrubbed version literally said that “Instead of giving you answers” it “walks you through the thinking process so you actually understand.”

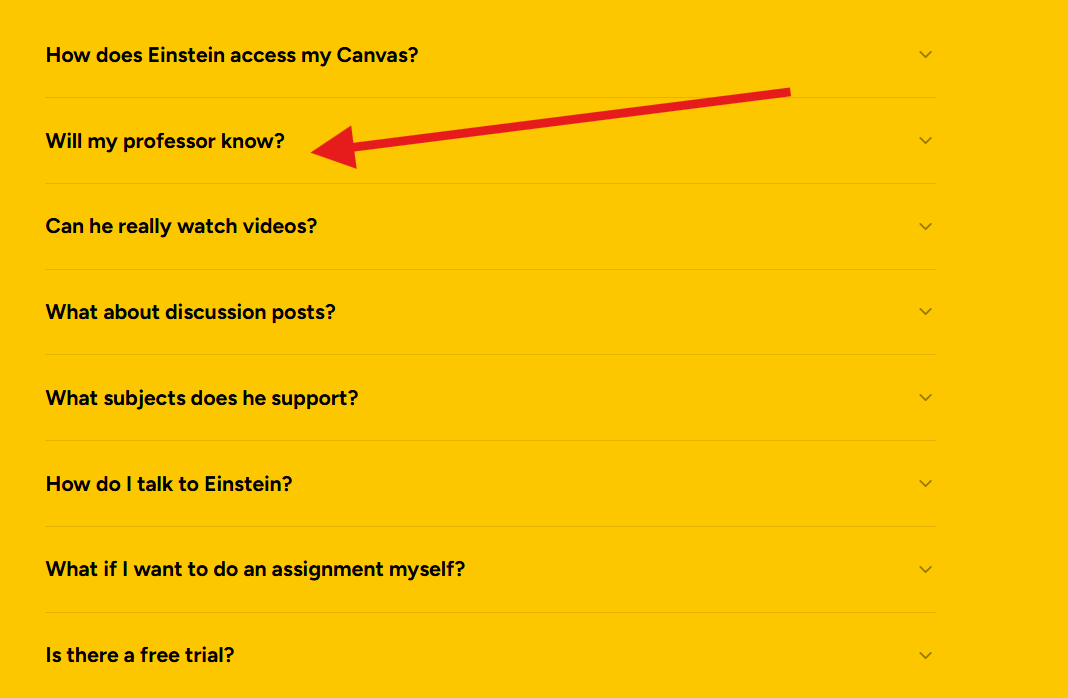

Here also is that question I quoted from the FAQ on Tuesday morning:

In the Tuesday afternoon version, that’s simply erased.

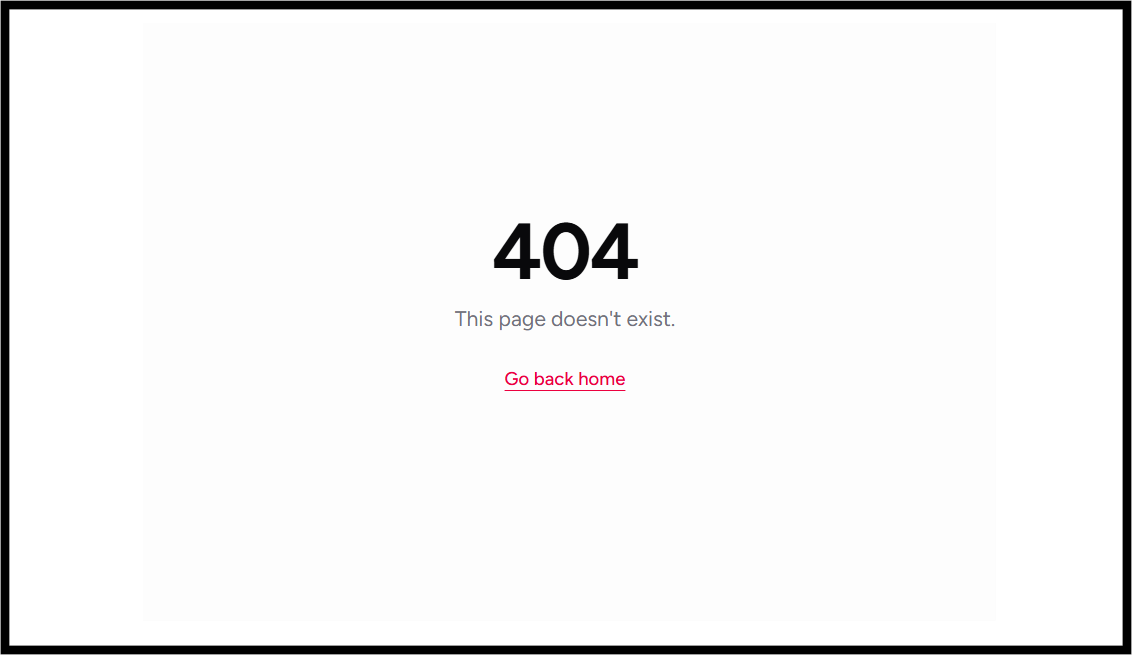

So that I don’t bury part of the headline: as of this morning, Thursday morning, Einstein itself has been erased, it seems. When I went to the site to catalogue the differences, here is what Einstein looked like (about 10am ET, 2/26/26):

RIP Einstein.

Tutorwashing

Even if Einstein stays dead, it won’t matter. The genie has been out of the bottle for a long time now. There are a hundred Einsteins. In 90 days, there will be a thousand. The LMS is still under existential threat. It will still be up to the LMS makers to decide whether to fix it or die. And it will be up to the people who use and pay for LMSs to decide whether just addressing agentic chatbots is enough: blocking the Einsteins while leaving a carnival of other cheating services on their platforms.

We will see.

But, to me, the headline of all this is the term I think I just made up — tutorwashing.

It says so, so much that, whoever Einstein is, their first inclination was to turn it into a tutor. To rebrand unapologetic cheating into “the personal tutor every student deserves.” It’s somehow worse.

And it reminds me of the cynical, completely not credible garbage that Chegg pulled for years — insisting with a straight face that they were not defrauding the entire value premise of learning and academic credentials, they were a tutor. They helped students who were stuck. They were better than schools and actual tutors. It was never true. It is never true.

I seriously doubt that anyone at Einstein changed what Einstein does (or did) in hours. I’d bet that Einstein was Einstein, able to do exactly what it was built to do, exactly what they said it did first — cheat. Do the work of learning so students didn’t have to be bothered. The Chegg model. The Course Hero (Learneo) model. The ChatGPT model.

That Einstein thought they could get away with that by calling it tutoring says more than I ever could. From their view, why not? It worked for Chegg for years. It’s still working. Knowing it’s a lie requires skepticism, inquiry, and understanding. Whoever Einstein is, they were not wrong to think they would not get much of any of that. Oh, it’s a tutor? Great!

I’d have some respect for you if you just admitted that a few dollars in your pocket means more to you than whether students actually know anything. Or what a college degree means to anyone. Own your malignance. Hiding behind tutoring is a low con. But it is, quite clearly, the first page in the playbook.

Universities in India Limit AI Use in PhD Papers — to 10%

According to press reports from India, the University of Calcutta will limit the amount of AI-created text in PhD work to 10%:

Calcutta University (CU) is set to introduce new regulations for PhD research that will restrict the use of artificial intelligence (AI) in the preparation of research papers and theses. Under the proposed norms, not more than 10 per cent of a PhD thesis will be allowed to be generated using AI.

And:

The university now plans to deploy specialised software to detect the extent of AI usage in academic work. If it is found that more than 10 per cent of a research paper or thesis has been generated using AI, the submission will be rejected.

The article also says that:

Jawaharlal Nehru University and University of Delhi use specialised software to identify AI-generated text, rejecting papers where AI use exceeds 10 per cent.

I don’t have too much to say about this.

On one hand, I’m glad these universities are checking for AI creation, making it clear that they care to know whether the student did the work. I’ll never understand schools that don’t check and, by extension, don’t seem to care.

At the same time, the 10% idea is a silly one. If a PhD student did not write 10% of their thesis, do they still get 100% of their degree? Will they get 100% of future wages?

Further, a hard 10% is weird. I can see many circumstances in which 15% AI may be just fine. Others in which 2% may be a real problem. It depends a great deal on what the AI is creating. No? What if the 10% that the AI wrote was the actual research? What if it only wrote the lit review? Or just a summary? I think that matters. And it cannot be captured in a number.

Finally, the article does not say, but it’s probably not too hard to learn which AI detection system these schools are using. Once that’s known, students will pre-check their scores and edit to get a safe 9.3% or whatever. Not only does this fail to achieve the objective of directing student research away from AI, it wastes everyone’s time.

A student who spends four hours checking, editing, and rechecking their work is engaged in unproductive work — busy work, we used to call it. Pointless. Further, the educators who then scan the revised and reduced work on the same system are wasting their time too. Of course it’s going to say 9.3%. You told everyone it needed to be below 10%.

Most of all, you’ve really only just taught students how to use AI and edit to avoid tripping an artificial standard. I called it silly already. That’s what it is.
